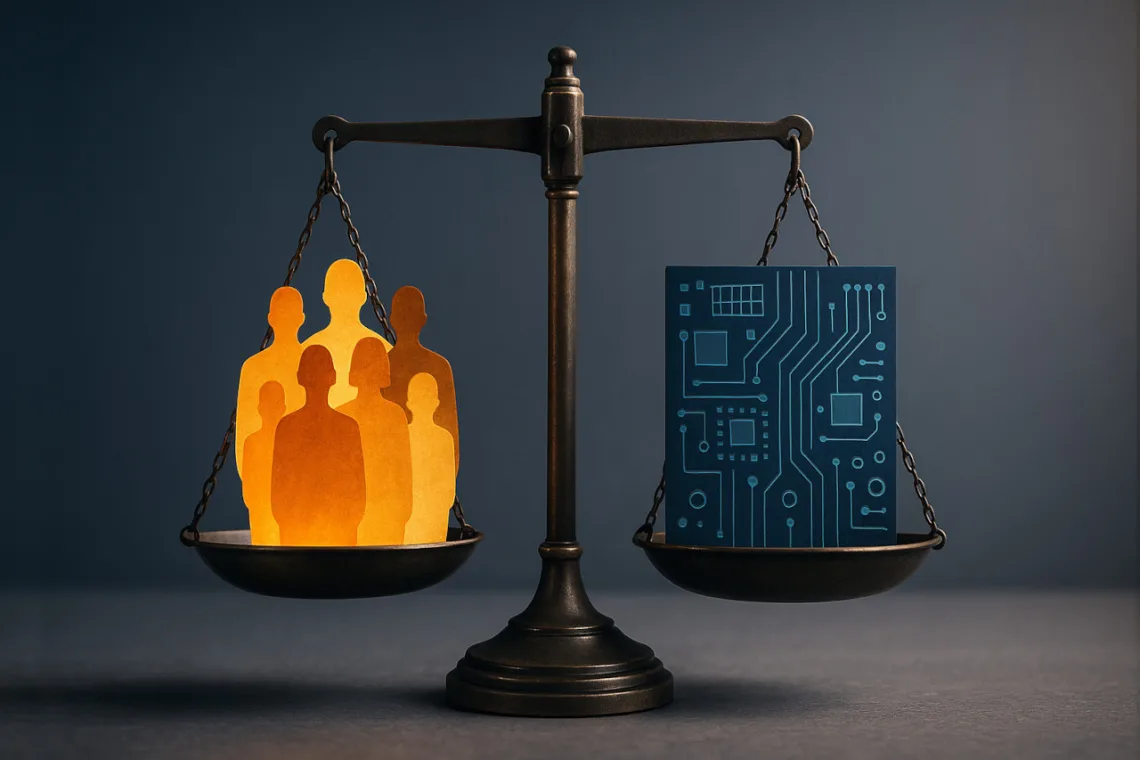

Fairness doesn’t mean rewriting reality. It means widening the field of opportunity and building AI systems that reflect that possibility.

The Calibration Challenge

In early 2024, image-generation tools from major AI labs made headlines for portraying America’s Founding Fathers as racially diverse. Many people would consider the intent laudable, and inclusion does matter, but the result was factually inaccurate. When algorithms overcorrect, they don’t solve bias; they replace one distortion with another.

We must address both sides of this challenge:

- AI systems can perpetuate historical biases

- AI systems that swing too far in correction can distort facts to meet demographic targets

Either failure can alienate stakeholders and trigger a backlash that undermines the very fairness the system was meant to deliver. The solution isn’t choosing sides. It’s finding the calibration point that expands opportunity without sacrificing accuracy or trust.

In 20 years of deploying AI marketing tools, I’ve seen this bias-correction cycle play out repeatedly across SMBs, corporate giants, non-profits and political campaigns. Systems reflect historical patterns. Teams try to fix them. The fix goes too far. Trust erodes. The question every organization faces is the same: How do you address AI bias without creating new distortions?

This isn’t just a technical problem. It’s a systems design problem that affects every organization deploying AI tools. Get it wrong, and you face customer backlash, employee disengagement, and competitive disadvantage. Get it right, and you unlock broader talent pools and more authentic market reach.

What is Implicit Bias?

Implicit biases are unconscious associations, shaped by culture, exposure, and repetition, that influence decisions without conscious intent. Think of implicit bias as a cognitive shortcut rooted in our brains’ need to process information quickly. In marketing and data science, we see the same heuristic logic: people often associate “professional” with “white male” because that’s the pattern they’ve seen most frequently.

Generative AI models mirror this logic if trained without guardrails. They learn from massive human-generated datasets, building statistical associations that approximate thought but lack moral context. As Kirwan Institute research notes, implicit bias isn’t intent—it’s inertia. AI simply codifies that inertia at scale—then amplifies it through repeated use across millions of interactions.

How Generative AI Inherits and Amplifies Our Biases

Large language models and image generators train on massive troves of content, including text, images, and metadata. They absorb everything in it: facts, opinions, and biases.

AI systems have been shown to reproduce stereotypes across professions and demographics. Without adjustment, text models link “nurse” with female pronouns and image generators default to white male CEOs. Generative AI that reflects bias can also amplify it by reinforcing patterns in its outputs.

When AI developers intervene to break this self-reinforcing feedback loop, they risk swinging the pendulum too far. For example, diversity filters applied to image generators have been shown to produce historically inaccurate depictions. The algorithm tried to appear fairer by becoming less truthful.

The business impact is measurable. Organizations deploying biased AI face reputational damage, regulatory scrutiny, and market share loss. But those who overcorrect face different challenges: customer distrust, employee cynicism about “performative” initiatives, and resource misallocation.

The Case for Access Expansion—Without Quotas or Distortion

Organizations seeking competitive advantage recognize that homogeneous teams miss market opportunities. Research shows diverse teams generate more innovative solutions and better understand varied customer bases. But the path to diversity matters.

Rather than mandating demographic ratios, effective organizations focus on pipeline expansion. They broaden their talent search beyond traditional networks. In practice, this could mean recruiting beyond Ivy League networks and affluent pipelines. It requires more effort, but produces stronger outcomes. Companies don’t need quotas to diversify; they need intentional outreach. When you invite top talent from underrepresented groups to apply, you naturally build a stronger, more diverse team. The evidence points the same way: organizations with the highest levels of racial and ethnic diversity are 35% more likely to exceed their industry’s median financial performance.

The same principle applies to AI systems:

In datasets: Rather than fixing demographic ratios in outputs, oversample underrepresented groups in the training data, the model’s equivalent of a candidate pool, so the system learns about them without enforcing quotas on results.

In prompts: Encourage diversity through forward-looking creativity, not historical revisionism. In fiction, generate new diverse characters rather than rewriting existing ones.

In campaign optimization: Measure engagement across new audiences rather than enforcing demographic parity in ad delivery.

The goal is not statistical equality—it’s equal access to representation.
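To make the dataset point concrete, here is a minimal Python sketch of input-side oversampling. It is an illustration under stated assumptions, not a production recipe: the function name `oversample_inputs`, the `group` field, and the `min_count` threshold are hypothetical choices made for this example. The sketch tops up small groups in the training pool and places no constraint on what a model later outputs.

```python
import random
from collections import Counter


def oversample_inputs(records, group_key, min_count, seed=0):
    """Top up every group that has fewer than `min_count` training records.

    Extra records are drawn with replacement from the group's own examples.
    Only the training inputs change; nothing here constrains model outputs.
    (Hypothetical helper written for this article, not a library function.)
    """
    rng = random.Random(seed)  # fixed seed keeps the resampling reproducible

    # Bucket the records by their group label.
    by_group = {}
    for record in records:
        by_group.setdefault(record[group_key], []).append(record)

    pool = list(records)  # every original record is kept
    for members in by_group.values():
        shortfall = min_count - len(members)
        if shortfall > 0:
            pool.extend(rng.choices(members, k=shortfall))
    return pool


# Toy data: group "B" is underrepresented in the raw training set.
records = [{"text": f"a{i}", "group": "A"} for i in range(8)]
records += [{"text": f"b{i}", "group": "B"} for i in range(2)]

balanced = oversample_inputs(records, "group", min_count=5)
counts = Counter(record["group"] for record in balanced)
print(counts["A"], counts["B"])  # 8 5
```

Duplicating existing records is the simplest form of oversampling and can lead a model to memorize the repeated examples. Sourcing genuinely new data from the underrepresented group is usually the better long-term fix where it is feasible.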

When Correction Becomes Overcorrection

Market Signals of Overcorrection

When organizations pursue representation without authenticity, markets respond. Customers detect inauthenticity—Edelman research consistently shows that consumers distrust brands they perceive as engaging in performative diversity initiatives.

Several patterns indicate overcorrection:

Inauthentic Representation: Marketing campaigns featuring diverse models without diverse decision-makers feel hollow. AI-generated personas reflecting demographic quotas rather than actual customer segments miss the mark.

Historical Distortion: AI systems have produced racially diverse images of medieval European settings or Revolutionary War scenes—factually inaccurate depictions that undermine trust. Similar issues appear globally when AI systems apply Western diversity frameworks to non-Western contexts.

The Research Paradox: Some AI systems have overcorrected to the point where they refuse to generate baseline images for bias research. For example, when asked to “create an image of wealthy people without correcting for bias,” ChatGPT declines, stating it cannot “deliberately rely on biased or uncorrected training data.” This creates a circular problem: organizations cannot measure AI bias without uncorrected baseline outputs, yet correction algorithms prevent exactly that. Consequently, systems obscure rather than address their own limitations, which makes transparent auditing far harder.

A Framework for Balanced Correction

1. Data Science Level—Context-Aware Design

Implement a talent-pool expansion model:

- Oversample underrepresented groups in training data inputs (not output quotas)

- Employ context tagging to preserve accuracy—label time periods, geography, and cultural context

- Use dual metrics: Measure both diversity indicators and factual accuracy

- Validate continuously: Research published on arXiv and in AI & Society suggests that fairness metrics applied without context can produce misleading models
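One way to picture context tagging and dual metrics together is the Python sketch below. Everything in it is an assumption made for illustration: the `TaggedOutput` fields, the use of normalized Shannon entropy as the diversity indicator, and the reviewer-supplied `is_accurate` flag. The idea is simply that both numbers are reported per context, so a high diversity score cannot hide a low accuracy score in a historical setting.

```python
import math
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class TaggedOutput:
    """One generated output plus the context tags a reviewer attached to it."""
    group: str         # demographic label assigned during review (hypothetical)
    period: str        # time-period tag, e.g. "1780s" or "contemporary"
    region: str        # geography tag, e.g. "north_america"
    is_accurate: bool  # reviewer verdict against verified reference data


def diversity_index(outputs):
    """Normalized Shannon entropy over the groups that appear.

    0.0 means a single group; 1.0 means the observed groups are evenly spread.
    """
    counts = Counter(o.group for o in outputs)
    if len(counts) < 2:
        return 0.0
    total = sum(counts.values())
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    return entropy / math.log(len(counts))


def accuracy_rate(outputs):
    """Share of outputs a reviewer marked as factually accurate."""
    if not outputs:
        return 0.0
    return sum(o.is_accurate for o in outputs) / len(outputs)


def dual_metrics_by_context(outputs):
    """Report both metrics separately for each (period, region) context."""
    by_context = {}
    for o in outputs:
        by_context.setdefault((o.period, o.region), []).append(o)
    return {
        context: {
            "diversity": diversity_index(group),
            "accuracy": accuracy_rate(group),
        }
        for context, group in by_context.items()
    }


outputs = [
    TaggedOutput("A", "contemporary", "global", True),
    TaggedOutput("B", "contemporary", "global", True),
    TaggedOutput("A", "1780s", "north_america", True),
    TaggedOutput("B", "1780s", "north_america", False),
]
report = dual_metrics_by_context(outputs)
for context, metrics in report.items():
    print(context, metrics)
```

In this toy data both contexts score the same on diversity, but only the contemporary one holds up on accuracy. That gap in the historical context is the kind of signal a single fairness metric would miss.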

2. Governance Level—Transparent Oversight

Build inclusive governance structures:

- Diverse review teams: Include ethicists, historians, data scientists, domain experts, and end-user representatives

- Publish transparency reports: Share dataset compositions and audit results

- Define clear KPIs: Measure opportunity access (pipeline breadth) rather than outcome ratios

- Conduct regular audits: Prevent both under-representation and overcorrection through continuous monitoring

3. Implementation Level—Authentic Application

Apply AI thoughtfully:

- Treat AI personas as extensions of reality, not replacements for it

- Use AI to discover and elevate underrepresented voices and talent, not fabricate them

- Create new diverse narratives rather than rewriting existing ones to meet demographic targets

Strategic Implementation Guidance

Organizations transitioning to these balanced correction approaches can deploy a three-phase implementation plan followed by ongoing maintenance:

Phase 1: Establish baseline metrics

- Audit current AI system outputs for demographic patterns

- Measure factual accuracy against verified data

- Document stakeholder concerns and feedback

Phase 2: Expand training data pools

- Identify underrepresented groups relevant to your domain

- Source additional training data representing these communities

- Implement context tagging for historical/cultural accuracy

Phase 3: Deploy and monitor

- Launch revised AI systems with expanded training

- Track both diversity metrics and accuracy metrics

- Gather stakeholder feedback on authenticity

- Adjust based on real-world performance

Ongoing Maintenance

- Quarterly audits of AI outputs

- Regular stakeholder consultations

- Continuous refinement of training data

- Transparency reporting to build trust

The Competitive Advantage of Balanced Correction

Organizations that master this balance gain:

Wider AI training pools: By expanding training data beyond overrepresented demographic patterns, AI systems learn to recognize and serve broader populations accurately. You’ll improve product relevance across diverse market segments.

Broader market reach: AI-generated content that authentically represents diverse customers builds trust and engagement. You’ll expand market opportunities without the backlash that follows algorithmically forced demographic quotas in outputs.

Operational efficiency: When AI systems accurately reflect reality while expanding representation, organizations avoid the costs of overcorrection. You’ll reduce customer complaints, employee cynicism, and wasted resources on initiatives perceived as unfair.

Stakeholder trust: Transparency in AI governance and balanced correction approaches build confidence among employees, customers, and investors who value both fairness and merit.

The companies that thrive won’t be those that ignore bias or those that overcorrect. They’ll be those that calibrate continuously, expanding opportunity while preserving accuracy.

Building Trust Through Calibrated AI

The challenge of implicit bias in AI systems comes down to a core business question: How do we build algorithms that expand market reach and opportunity while maintaining accuracy and stakeholder trust?

The answer lies not in ideological positions but in rigorous systems design and continuous calibration. Organizations deploying AI should:

- Expand inputs strategically: Oversample underrepresented groups in training data to teach AI systems about diverse populations—without mandating demographic ratios in outputs.

- Preserve accuracy relentlessly: Maintain historical context, cultural authenticity, and factual truth even while pursuing representation. Medieval European settings should look medieval and European; modern diverse teams should reflect actual workforce composition.

- Measure authenticity, not quotas: Track whether AI-generated content resonates with the communities it represents. Customer trust and engagement are better metrics than demographic percentages.

- Audit bidirectionally: Monitor for both underrepresentation (traditional bias) and overcorrection (algorithmic distortion). The goal is calibration, not swinging between extremes. Try the Bias Debt Framework.
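A bidirectional audit can be sketched in a few lines of Python. This is a hedged illustration only: the function name `audit_bidirectional`, the `reference_shares` input, and the 10-point `tolerance` band are hypothetical. The reference distribution in particular is an assumption that reviewers would have to choose per context, for example from verified customer data or the historical record. The check flags drift in either direction instead of treating only one as a problem.

```python
from collections import Counter


def audit_bidirectional(observed_groups, reference_shares, tolerance=0.10):
    """Flag groups whose observed share drifts from a context-specific reference.

    Returns a dict mapping each group to "under", "over", or "ok".
    `reference_shares` and `tolerance` are assumptions set by reviewers.
    """
    total = len(observed_groups)
    if total == 0:
        raise ValueError("no outputs to audit")

    counts = Counter(observed_groups)
    findings = {}
    # Check every group seen in either the outputs or the reference.
    for group in sorted(set(reference_shares) | set(counts)):
        observed = counts.get(group, 0) / total
        expected = reference_shares.get(group, 0.0)
        gap = observed - expected
        if gap < -tolerance:
            findings[group] = "under"
        elif gap > tolerance:
            findings[group] = "over"
        else:
            findings[group] = "ok"
    return findings


reference = {"A": 0.6, "B": 0.4}  # hypothetical reference for one context

skewed = audit_bidirectional(["A"] * 9 + ["B"] * 1, reference)
aligned = audit_bidirectional(["A"] * 6 + ["B"] * 4, reference)
print(skewed)   # {'A': 'over', 'B': 'under'}
print(aligned)  # {'A': 'ok', 'B': 'ok'}
```

The same function serves both directions of the audit: run it against outputs from before and after a correction, and a group that flips from "under" to "over" suggests the fix went too far.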

Organizations that master this balance unlock measurable competitive advantages: broader market reach without customer backlash, richer training datasets without historical distortion, and stakeholder trust built on transparency rather than performative gestures.

When AI correction is properly calibrated, it expands business opportunity authentically. When overcorrected, it undermines the very trust and market access that diversity initiatives aim to build.